5 (more) logical fallacies in the era of RFK Jr.

Last month I wrote about 5 logical fallacies that are trending right now in the world of health, and there was a resounding request for round two. So here you have it!

But first: why should you care? Learning to identify logical fallacies is a form of prebunking. There are SO MANY false health claims on the internet, and chasing them down one by one is not possible. Instead, if you can learn to recognize these common errors in reasoning and manipulative patterns, you can be prepared to discern unreliable information when you encounter it in the wild.

And research has shown prebunking works: teaching people logical fallacies helps them discern what information is reliable, and what is not.

Alright, now to the fallacies.

Anecdotal fallacy

The anecdotal fallacy occurs when people use their limited personal experience to make sweeping conclusions. Our personal experiences are important, and they guide many of our decisions. But they also often give us incomplete information because they only reflect one experience or point of view. The error in reasoning occurs when a person assumes their limited experience provides complete information and is enough to make much broader conclusions.

Examples of this fallacy in action:

- My child didn’t get vaccinated and they’re fine! (anecdote) Vaccines aren’t really needed. (broader conclusion)

- I stopped eating bread and felt better (anecdote) — gluten is causing so much inflammation in the modern diet. (broader conclusion)

- I started taking this new supplement and have so much energy (anecdote), it really works! (broader conclusion)

Appeal to emotion fallacy

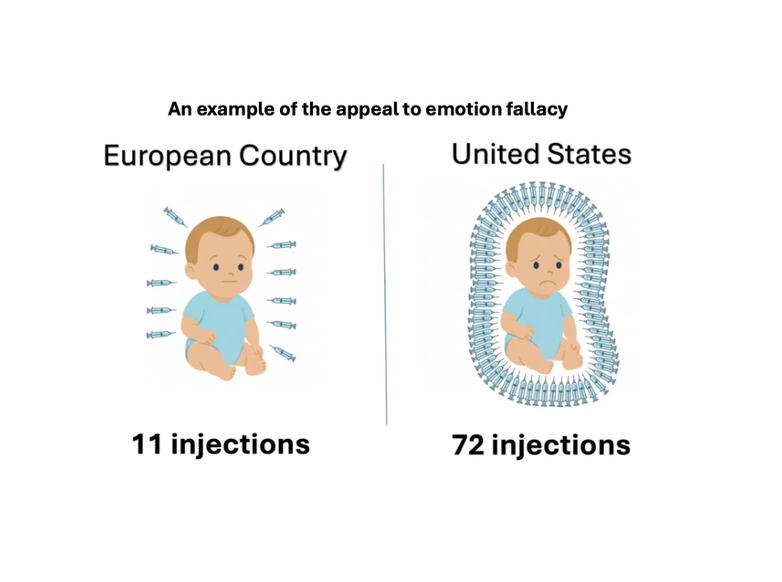

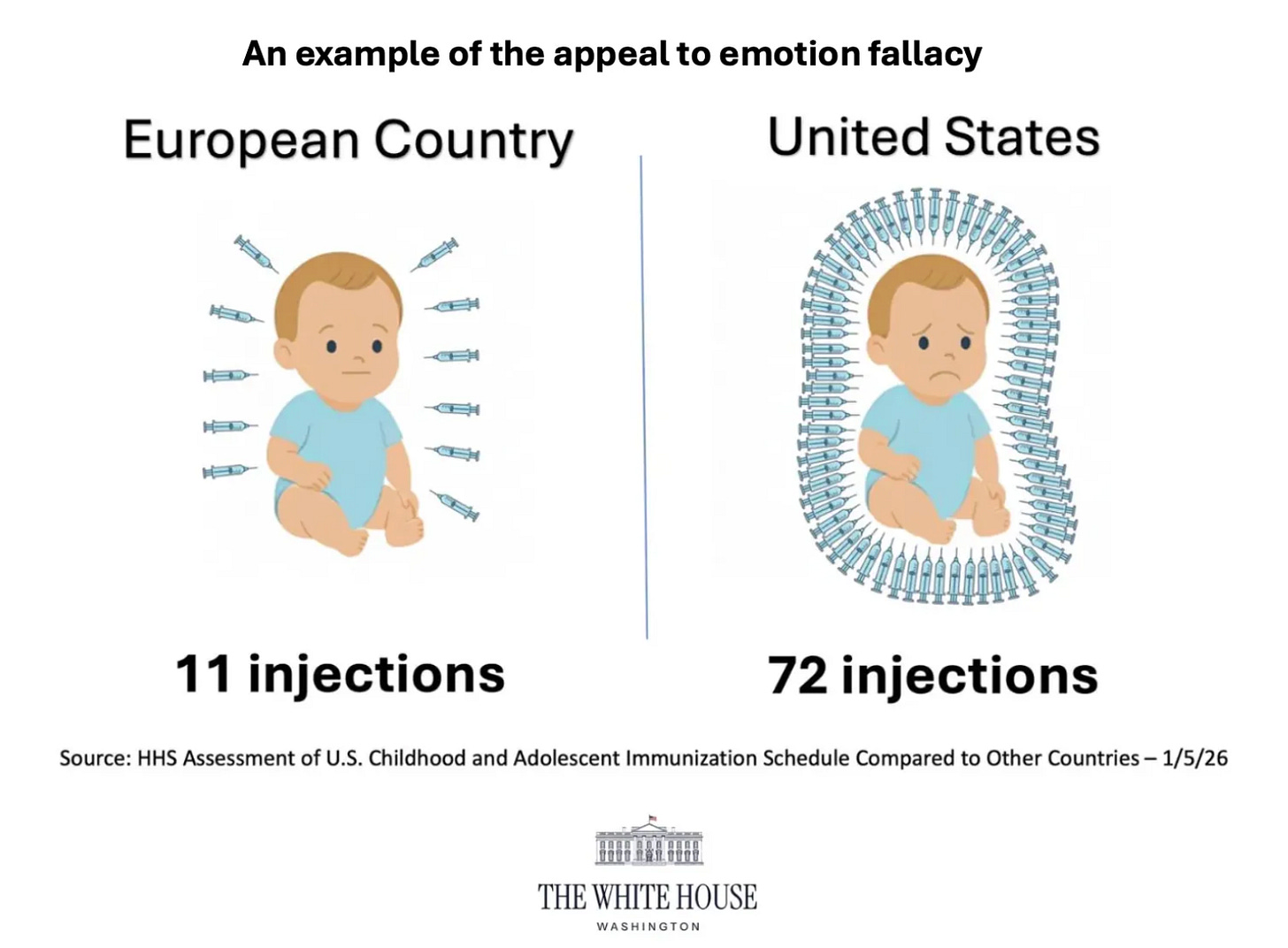

The appeal to emotion fallacy tries to win an argument not by providing evidence, but by distracting people with strong, emotionally charged language or imagery (such as fear, anger, or sadness).

The image below is a great example: it was used to try to convince Americans that children in the US receive too many vaccines. Instead of providing accurate data, the image triggers an emotional reaction by showing lots of needles, which feels scary, and by showing the US baby as unhappy (frowning).

This emotionally charged imagery distracts people from the fact that the message is inaccurate. US babies do not receive 72 injections, and the image creates a distorted view of immunization by only emphasizing fear of needles while ignoring the benefits.

Emotion, by itself, isn’t inherently wrong or invalid, and it can be used appropriately in health messaging. The error in reasoning occurs when emotion is used in place of a reasoned argument, or when it is used to distract from the fact that insufficient or inaccurate evidence has been provided (as was the case here).

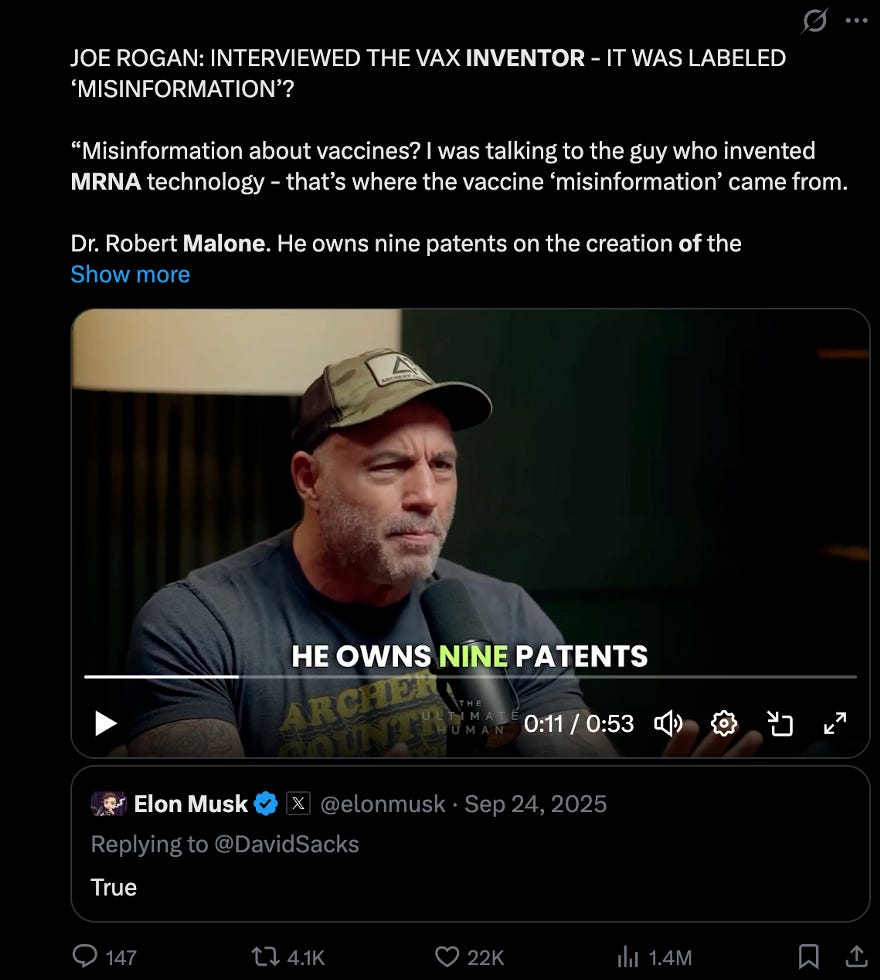

Appeal to authority

The appeal to authority fallacy says that authority figures (experts) are always right. But this is not always true; reality does not bend to the will or whims of experts. Right now I think this one is especially confusing, because the scientific community says “trust the experts!” but then when an expert says something a bit weird, they say “ignore them!”

In science, what ultimately matters is the quality of the data and analysis—not who is making the claim. Experts are often more reliable because they’re trained to evaluate evidence, which is why it usually makes sense to trust them.

But their credentials alone aren’t proof — people with MDs and PhDs have to provide data to back up what they’re saying. The error in reasoning comes from assuming that a person’s title or credentials alone are enough to say they are correct, without requiring they provide additional evidence to support their argument.

Examples of this fallacy in action:

- “Dr. Malone is the inventor of mRNA vaccines, he knows what he’s talking about!”

- “I’ve seen five doctors online recommend this supplement, it must work.”

- “A Harvard study showed Tylenol during pregnancy is linked to autism, it must be true!”

Moving the goal posts fallacy

The moving the goal posts fallacy occurs when someone refuses to accept valid evidence in support of an argument, and instead changes their demands. This tactic makes it so an argument is never settled because the demands are constantly changing.

One of the most famous examples of this fallacy is the rumor around vaccines and autism. Back in the 1990s, the original argument was that the MMR vaccine might be linked to autism. This was studied extensively, and no link was found. But instead of saying “oh that’s great!” the goalposts changed and the rumor lived on: next it was alleged that thimerosal (a vaccine ingredient) was actually causing autism. When studies found no link between thimerosal and autism, the demands shifted again. This has happened over and over again for the last three decades, turning what was once a valid hypothesis into an unfalsifiable rumor that is designed to never die. When moving the goal posts is used, no amount of data is ever deemed “enough.”


Strawman fallacy

The strawman fallacy occurs when someone misrepresents an argument in order to make it easier to attack. Instead of engaging with what was actually said, they oversimplify or exaggerate it, making it sound more extreme or simplistic than it really is. This fake version (“the strawman”) is easier to knock down, creating the illusion of winning the argument. But in reality, the original point was never addressed.

Examples of this fallacy in action:

“You said vaccines are safe, but clearly they have side effects!”

- Real claim: “safe” means the benefits of vaccines outweigh the risks, and serious side effects are very rare

- Strawman version: “safe” means no risks of any side effects whatsoever

“Doctors just want you to take a pill for everything.”

- Real claim: Chronic health conditions are complex, and sometimes diet and exercise alone are the best approach, while other times medications may be needed.

- Strawman version: Doctors just want to push pills for conditions that are really caused by poor diet and lack of exercise.

Communication tips for talking about fallacies

Prebunking by teaching these logical fallacies can be an effective strategy for helping people recognize unreliable health information. Here are a few communication tips to keep in mind.

- The goal is not to make people feel stupid. EVERYONE uses and falls for these fallacies from time to time, and they are not a sign of lack of intelligence. The goal is to empower people to identify and resist manipulation tactics. In general, shame-based messaging does not work, and it won’t work here.

- Don’t just name the fallacy, explain the reasoning error. Naming the fallacy alone likely won’t help — the goal is to help people understand for themselves why the reasoning doesn’t hold up, so they can be equipped to identify the flaw in the future.

- Provide real-world examples when possible. Hypothetical examples can help, but real-world examples help people see and understand these manipulation tactics in the wild, setting them up to identify them in the future.

Stay tuned for part 3 of this series, where we’ll dive into other rhetorical tricks that are commonly used to spread false health information. Subscribe below to follow along!

Kristen Panthagani, MD, PhD, is completing a combined emergency medicine residency and research fellowship focusing on health literacy and communication. In her free time, she is the creator of the newsletters You Can Know Things and The Public Health Roundup. You can also find her on Instagram, Threads, and LinkedIn. Views expressed belong to KP, not her employer.