Did AI really beat ER doctors at ER triage?

Nope. A look at an interesting AI study that has led to some very overhyped headlines.

Yesterday a new study was published in Science comparing the diagnostic capacity of several AI tools to physicians. It made headlines for including something novel — they fed the AI tools and clinicians real-world information from the medical charts of ER patients and asked them to come up with likely diagnoses.

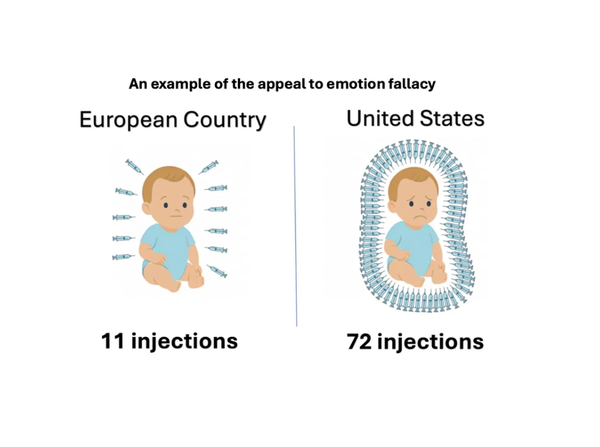

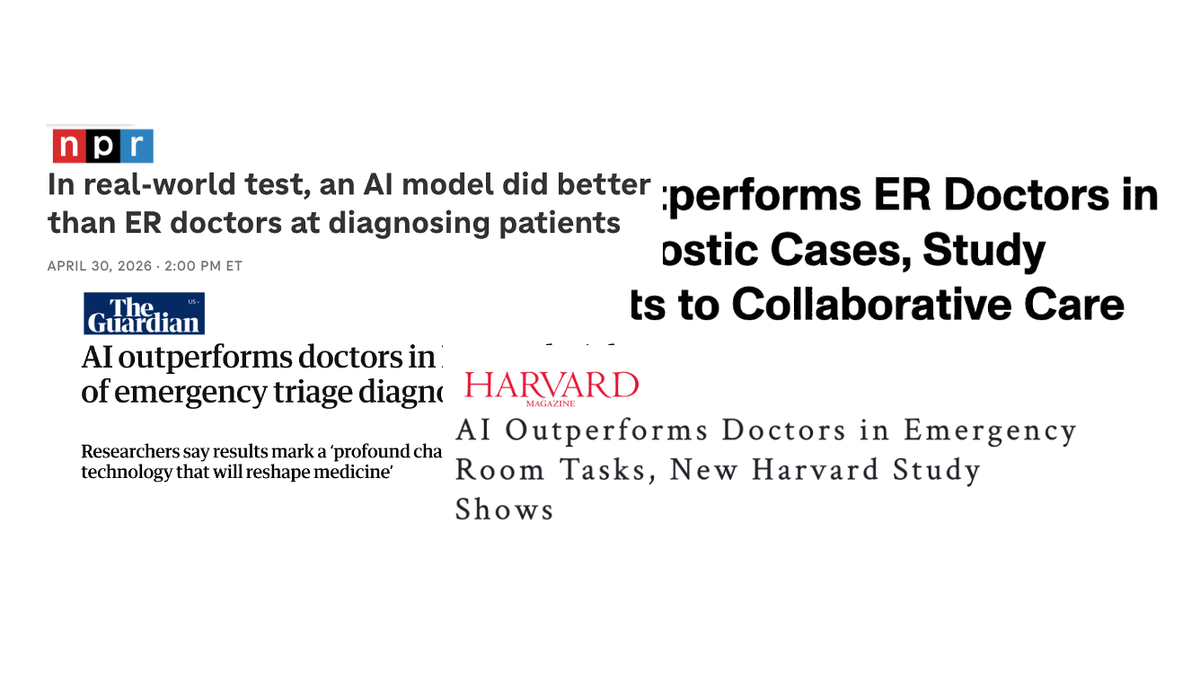

If you read the headlines, you’d come away with the impression that AI tools can now beat ER doctors at their own job — even for real patients:

Sounds impressive, right? You may be surprised to learn that all these headlines are based on results from only two physicians — who aren’t ER doctors — performing a task that isn’t really what ER doctors do.

Here’s what the study actually did.

A look into the details

The study included multiple experiments, but we’ll focus on the section making headlines: the experiment using real-world ER patient information.

They pulled medical charts from 76 randomly selected ER patients who were ultimately admitted to the medical floor or ICU, then broke the information down into three stages:

- Stage 1: Information available at ER triage (patient sex, age, primary complaint, nursing triage note, initial vital signs, etc.)

- Stage 2: Information available after ER physician evaluation (ER resident and attending notes, exam, labs and imaging studies completed in the ER, etc.)

- Stage 3: Information available after admission to the hospital (admission note from inpatient team including exam and assessment, etc.)

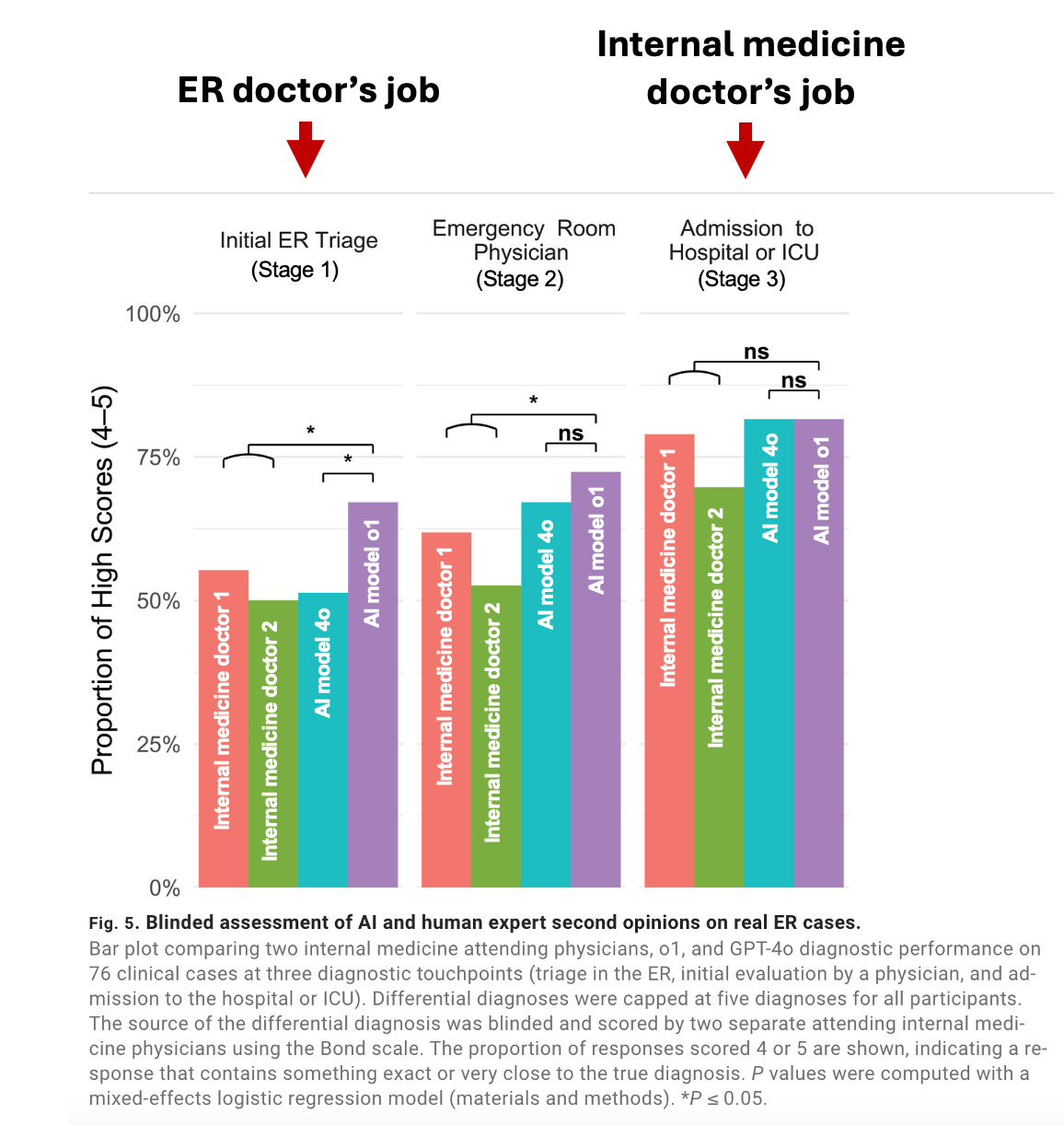

They provided this information to two large language models (LLMs) and two internal medicine physicians (not ER physicians!). The AI tools and internal medicine physicians were asked to come up with a “differential diagnosis” for each patient at each stage: a list of possible diagnoses that could explain the patient’s symptoms. They were limited to 5 possible diagnoses.

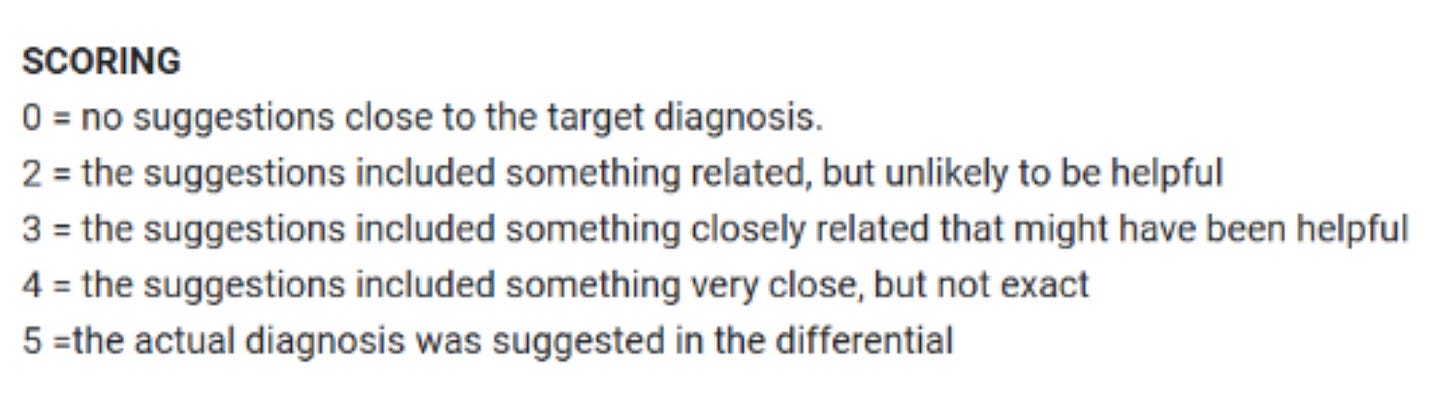

Next, a different physician reviewed the entire medical chart for each patient to determine the ultimately correct diagnosis. Using that correct diagnosis, the lists of possible diagnoses generated by the AI models and the two internal medicine physicians were graded according to the Bond scale.

What they found

At the first two stages (ER triage and after evaluation by an ER doctor), AI model o1 was slightly more likely than the two internal medicine physicians to have included the actual diagnosis or something very close to it (score 4 or 5) in its list of five possible diagnoses. This is what all the headlines are raving about. There was no difference between the AI models and physicians at the point of admission (stage 3).

Why the headlines are inaccurate

To be sure, this result is an impressive feat for an AI model. But it’s not evidence that AI “did better than ER doctors at diagnosing patients” or that it “outperformed doctors in emergency room tasks,” as the headlines said.

Here’s why.

1. They didn’t test ER doctors.

If we’re going to compare AI tools to physicians’ clinical ability, we should start by comparing to physicians who actually practice that specialty. I would not be surprised if an LLM could beat a dermatologist at a neurosurgery board exam, but that’s not a particularly helpful thing to know.

In this case, they evaluated internal medicine physicians instead of emergency medicine physicians. Why does this matter?

Unlike internal medicine physicians, ER physicians regularly see patients in ER triage and have substantially more practice generating differential diagnoses with very limited information (the very thing this study tested). Internal medicine doctors are incredibly smart and knowledgeable, but ER triage is not their specialty.

Notably, the two internal medicine doctors underperformed compared to the LLM in the two stages where ER doctors typically take the lead: ER triage and evaluation by the ER team. Once it got to the stage that is regularly performed by internal medicine doctors (admission), the LLM was no better.

Not including physicians from the appropriate specialty (and only including two physicians total!) makes this result difficult to interpret. And it makes the numerous headlines claiming the AI tool “beat ER physicians” factually false — they were never tested.

2. The point of ER triage is not to “guess the final diagnosis”

However, my biggest frustration with how these results are being misinterpreted stems from a misunderstanding of what emergency physicians do. As an ER doctor seeing a patient for the first time, my primary goal is not to guess your ultimate diagnosis. My primary goal is to determine if you have a condition that could kill you.

ER differential diagnoses are purposefully biased towards life-threatening diagnoses — even when these diagnoses are rare or not the most likely. This means if you only let us choose 5 possible diagnoses for something like chest pain, our list will be biased towards rarer things that could kill you (a heart attack) over common things that are not deadly (heartburn). Because of this intentional bias, the top 5 diagnoses of an ER doctor may often be “wrong” in the sense that the ultimate correct diagnosis may not be on the list, but “right” in the sense that life-threatening diagnoses were not missed.

Said another way, the primary goal of an ER doctor is not to diagnose every patient’s condition — the primary goal is to find and diagnose the emergencies.

Instead of looking at overall diagnostic accuracy as this study did, a more useful metric for ER triage would be the AI tool’s miss rate for deadly diagnoses. If an AI tool has higher diagnostic accuracy for common non-life-threatening diagnoses, but a higher miss rate for rarer but deadly diagnoses, it is not a helpful one for ER triage.
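To make the difference between these two metrics concrete, here is a minimal Python sketch. Everything in it is hypothetical: the four cases, the two "tools," and the function names `top5_accuracy` and `deadly_miss_rate` are made up for illustration and do not come from the study. It also simplifies grading to an exact match against the final diagnosis, whereas the study graded lists on the Bond scale.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Case:
    """One hypothetical patient (illustrative only, not study data)."""
    final_diagnosis: str
    life_threatening: bool
    differential: List[str]  # up to 5 diagnoses proposed at triage


def top5_accuracy(cases: List[Case]) -> float:
    """Share of cases whose final diagnosis appears anywhere in the list."""
    hits = sum(c.final_diagnosis in c.differential for c in cases)
    return hits / len(cases)


def deadly_miss_rate(cases: List[Case]) -> Optional[float]:
    """Among life-threatening cases only, share where the list missed it."""
    deadly = [c for c in cases if c.life_threatening]
    if not deadly:
        return None  # undefined when there are no deadly cases
    misses = sum(c.final_diagnosis not in c.differential for c in deadly)
    return misses / len(deadly)


# Hypothetical tool A: favors common diagnoses, misses the one emergency.
tool_a = [
    Case("heartburn", False, ["heartburn", "gastritis"]),
    Case("muscle strain", False, ["muscle strain", "costochondritis"]),
    Case("anxiety", False, ["anxiety", "heartburn"]),
    Case("aortic dissection", True, ["heartburn", "muscle strain"]),
]

# Hypothetical tool B: biased toward dangerous diagnoses, like an ER list.
tool_b = [
    Case("heartburn", False, ["heart attack", "pulmonary embolism", "heartburn"]),
    Case("muscle strain", False, ["heart attack", "pulmonary embolism"]),
    Case("anxiety", False, ["heart attack", "pulmonary embolism"]),
    Case("aortic dissection", True, ["heart attack", "aortic dissection"]),
]

for name, cases in [("A", tool_a), ("B", tool_b)]:
    print(name, top5_accuracy(cases), deadly_miss_rate(cases))
```

In this toy example, tool A has the higher overall accuracy (3 of 4 versus 2 of 4) but misses the only life-threatening case, while tool B catches it. An accuracy-only comparison would rank A above B, which is the opposite of what matters at triage.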

3. AI was fed the work of the ER team; it didn’t do it itself

Finally, some headlines suggested the AI model outperformed ER doctors at “ER tasks,” but this misrepresents what the study did. The model didn’t perform ER tasks — it didn’t prioritize which patients should be seen first, interview patients, perform exams, or decide what tests to order. The model was fed all the work and information curated by the ER team, then asked to generate possible diagnoses.

The harm of AI hype for healthcare

The study itself was interesting and worth being published (though I wish they had used a larger sample size and actually included ER physicians!). My bigger concern is how the results are being misrepresented in the media and the impact this will have on public perception of AI medical accuracy.

Overhyping the ability of AI to provide accurate medical diagnoses has the potential to do real harm by fueling overconfidence in AI tools, which may lead people to delay or avoid seeking care from an actual doctor. One in three people now turn to AI for health questions, many citing the cost of healthcare as a primary reason. Some surveys find a subset of people trust AI as much as or more than a doctor for diagnosing medical conditions. But publicly available AI tools like ChatGPT Health can be dangerously wrong, missing life-threatening conditions and providing harmful recommendations. And people aren’t good at telling the difference between accurate and inaccurate AI health advice: both are rated as trustworthy.

Inaccurate headlines like the ones covering this paper feed overconfidence in AI diagnoses, and the nuances of the actual study are often lost: which model (this wasn’t ChatGPT) in what context (fed all the information from a medical record collected by an experienced ER team, not a conversation with an online chatbot) looking at what output (everything we just discussed).

My concern is that people will see these headlines and then later, when they have a medical problem, some will decide they don’t want to pay thousands of dollars for an ER visit, thinking AI tools are just as good as (if not better than!) talking to an ER doctor.

Reading this headline, would you blame them?

Kristen Panthagani, MD, PhD, is completing a combined emergency medicine residency and research fellowship focusing on health literacy and communication. In her free time, she is the creator of the newsletters You Can Know Things and The Public Health Roundup. You can also find her on Instagram, Threads, and LinkedIn. Views expressed belong to KP, not her employer.